March 2026

Why AI Coding Fails

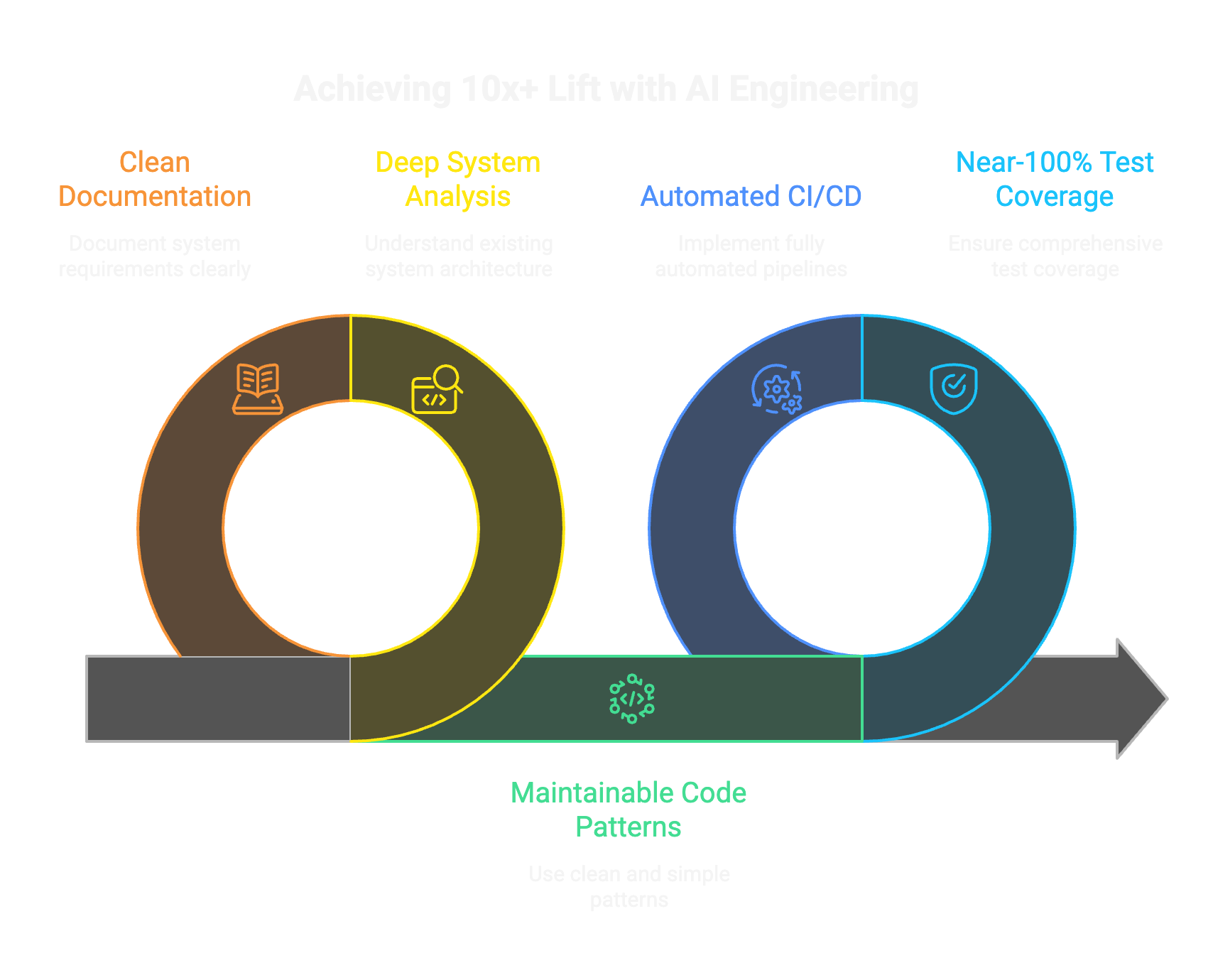

Because teams generate AI code without the right engineering process. We have found success when we start with deep analysis and clear documentation of existing systems, product requirement documents, simple and maintainable code patterns, near-100% test coverage, fully automated CI/CD pipelines, and similar rigor. Even with all of that overhead, AI still delivers a 10x or greater lift: a meaningful net positive.

We hear a version of this in almost every conversation: the team used AI tools to build an application. It went to production. Then they tried to add a new feature and it broke existing functionality, corrupted data, or both.

Or: someone built a proof-of-concept with AI in a few days. It worked well enough to go live. Now it is in production and nobody can safely change it.

We work in this space every day. We have spent decades building and running engineering teams at scale, and we now apply that experience through AI-assisted engineering across healthcare, pharma, manufacturing, and supply chain. The problems described above are real, we see them constantly, and they have specific causes that can be addressed. This article explains what we see, why it happens, and how we work differently.

Adding features to an AI-built system breaks things

This is the most common failure we hear about. A team builds an application with AI assistance. It goes live successfully. Then a second phase begins: new features, changed data models, additional integrations. And existing functionality starts failing in ways that are difficult to trace.

The mechanism is specific. When AI generates code without a deep understanding of the existing system, it builds against what it can see in the immediate context. It satisfies the request. But it creates implicit coupling to data structures, function signatures, and control flow that it did not fully map. When those are changed by a later feature, the original code breaks in ways the AI could not anticipate because it never had a complete picture of the system's invariants.
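
A minimal, hypothetical illustration of that coupling. The report function below was written against the order records it could see, and nothing records that dependency. All names and data shapes are invented for the example.

```python
# Hypothetical order records and report, invented to illustrate implicit coupling.

def load_orders_v1() -> list[dict]:
    # Phase 1: each order carries a flat "total" field.
    return [{"id": 1, "total": 40.0}, {"id": 2, "total": 60.0}]


def revenue_report(orders: list[dict]) -> float:
    # Written in phase 1. It silently depends on every order having a "total" key;
    # nothing in the code or docs records that dependency.
    return sum(order["total"] for order in orders)


def load_orders_v2() -> list[dict]:
    # Phase 2: a new feature splits "total" into "subtotal" and "tax".
    return [
        {"id": 1, "subtotal": 36.0, "tax": 4.0},
        {"id": 2, "subtotal": 54.0, "tax": 6.0},
    ]


print(revenue_report(load_orders_v1()))  # prints 100.0
try:
    revenue_report(load_orders_v2())
except KeyError as missing:
    # The phase-1 code fails far from the phase-2 change that caused it.
    print(f"report broke: missing key {missing}")
```

Without a test that runs `revenue_report` against the new data shape, this failure first shows up in production.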

Without test coverage, those regressions are invisible until they reach production. Without architectural documentation, the developer adding the feature (human or AI) has no reliable way to know what will be affected by the change. The failure is not that AI wrote the code. The failure is that nothing in the process ensured the code was safe to build on.

The proof-of-concept went to production

The second pattern we see is closely related. AI tools generate working code fast. A team builds a prototype in days. Stakeholders see it, like it, and ask for it to go live. The team ships it. Now it is a production system that was never designed to be one.

Because the initial build was fast, there are no codified standards, no test suite, no documented architecture. Each subsequent change is a patch on the previous patch. The codebase becomes layer upon layer of compensating fixes. Within weeks it is difficult to change safely, and the team is spending more time working around the system's fragility than building new capability.

This is not an AI-specific failure. Engineering organizations have pushed POCs to production for decades with the same outcome. AI compresses the timeline from months to weeks, which makes it feel like a new problem. The mechanism is the same: velocity without structural discipline produces systems that cannot evolve.

Why teams conclude AI tools are not ready

We understand why teams reach this conclusion. After a regression breaks production data, or a POC becomes an unmaintainable liability, the reasonable response is caution. Restricting or abandoning the tools makes sense as a short-term risk mitigation. We have seen organizations do this, and we respect the decision.

Where we disagree is the diagnosis. In every case we have examined, the failure traces to the engineering process around the tool, not to the tool itself. The same codebase, built with the same AI tools but with system analysis, test coverage, and codified standards in place, behaves differently. The difference is not the AI. It is the process.

How we approach AI-assisted engineering

Each step in our process exists because we have seen a specific class of failure when it is missing. Here is how we work, explained in terms of what it prevents.

Deep system analysis before any code changes

What this prevents: regressions caused by changes to a system the AI does not understand.

We direct AI agents to produce a full inventory of the existing system: architecture diagrams, dependency maps, data flow sequences, naming conventions, atypical patterns, configuration structures. The deliverables vary by system. A mainframe migration needs different documentation than a microservices refactor. We do not write code until we can explain the system's invariants, because those invariants are what new code must not violate.
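
One of these deliverables, a dependency map, can be bootstrapped mechanically before an agent or engineer interprets it. A minimal sketch for a Python codebase, assuming modules live under a single root directory; it uses only the standard-library `ast` module, and `build_import_map` and `reverse_dependencies` are hypothetical helpers, not part of any tool.

```python
import ast
from pathlib import Path


def build_import_map(root: Path) -> dict[str, set[str]]:
    """Map each module under `root` to the top-level modules it imports."""
    import_map: dict[str, set[str]] = {}
    for path in sorted(root.rglob("*.py")):
        module = ".".join(path.relative_to(root).with_suffix("").parts)
        tree = ast.parse(path.read_text(encoding="utf-8"), filename=str(path))
        deps: set[str] = set()
        for node in ast.walk(tree):
            if isinstance(node, ast.Import):
                deps.update(alias.name.split(".")[0] for alias in node.names)
            elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
                # Relative imports (level > 0) are skipped in this sketch.
                deps.add(node.module.split(".")[0])
        import_map[module] = deps
    return import_map


def reverse_dependencies(import_map: dict[str, set[str]], target: str) -> set[str]:
    """Modules that import `target` directly: the first places a change can break."""
    return {mod for mod, deps in import_map.items() if target in deps}
```

This only captures static imports. Transitive effects, dynamic imports, shared database tables, and other runtime coupling still need the human and agent analysis described above.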

Documentation organized for human review

What this prevents: building on an understanding that nobody has verified.

AI-generated analysis is only useful if engineers can read, challenge, and correct it. We structure the output for human consumption and review it carefully. We bring in subject-matter experts where the system's history or business logic requires context the code alone does not reveal. Errors caught here cost minutes. Errors caught in production cost days.

Feature definition with the same rigor

What this prevents: scope that drifts or conflicts with existing system behavior.

We define the new capability with the same thoroughness we applied to understanding the existing system. AI accelerates the research: exploring approaches, identifying edge cases, surfacing risks. The design decisions belong to engineers. The output is a specification we have read, understood, and are prepared to defend.

Implementation plan before implementation

What this prevents: AI-generated code that satisfies the request but violates system constraints.

We generate a sequenced plan: files affected, interfaces that must hold, data migration steps, rollback conditions. We review and challenge this before executing. The plan becomes the contract between our intent and the AI's output. Without it, the AI optimizes locally and breaks things globally.
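
The plan can also be made partly machine-checkable. A minimal sketch, assuming the plan is recorded as structured data and the list of changed files comes from version control (for example `git diff --name-only main`). The `ImplementationPlan` class and its fields are hypothetical; they simply mirror the elements listed above.

```python
from dataclasses import dataclass, field


@dataclass
class ImplementationPlan:
    """Hypothetical structured record of a reviewed implementation plan."""
    files_affected: set[str]
    interfaces_that_must_hold: list[str] = field(default_factory=list)
    migration_steps: list[str] = field(default_factory=list)
    rollback_conditions: list[str] = field(default_factory=list)


def unplanned_changes(plan: ImplementationPlan, changed_files: list[str]) -> list[str]:
    """Files the AI modified that the reviewed plan did not list."""
    return sorted(set(changed_files) - plan.files_affected)


plan = ImplementationPlan(
    files_affected={"billing/invoice.py", "tests/test_invoice.py"},
    interfaces_that_must_hold=["create_invoice(order_id) -> Invoice"],
    rollback_conditions=["invoice totals differ from the legacy report"],
)
# In practice the changed files would come from `git diff --name-only main`.
drift = unplanned_changes(plan, ["billing/invoice.py", "auth/session.py"])
print(drift)  # prints ['auth/session.py']
```

A non-empty result does not mean the change is wrong. It means the diff has left the contract and needs a human decision before it proceeds.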

Full test coverage as a structural requirement

What this prevents: the "adding features breaks things" problem. Directly.

We generate test suites at the unit level and as end-to-end unmocked integration tests. We spin up isolated test environments with infrastructure-as-code when the system requires it. If a future change breaks existing behavior, the test suite catches it before it reaches production. This is the single most important structural difference between AI-assisted engineering that works and AI-assisted engineering that produces the failures described at the top of this article.
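
A minimal sketch of the unmocked style, assuming a Python service backed by SQL. SQLite's in-memory mode stands in here for an isolated test environment, so the tests exercise real SQL and real constraints rather than a mock. The schema and function names are hypothetical; a production suite would point the same tests at infrastructure provisioned with infrastructure-as-code.

```python
import sqlite3
import unittest


def create_schema(conn: sqlite3.Connection) -> None:
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL NOT NULL)")


def record_order(conn: sqlite3.Connection, order_id: int, total: float) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute("INSERT INTO orders (id, total) VALUES (?, ?)", (order_id, total))


def total_revenue(conn: sqlite3.Connection) -> float:
    (value,) = conn.execute("SELECT COALESCE(SUM(total), 0) FROM orders").fetchone()
    return value


class OrdersIntegrationTest(unittest.TestCase):
    """Exercises real SQL against a real (in-memory) database: no mocks."""

    def setUp(self) -> None:
        self.conn = sqlite3.connect(":memory:")
        create_schema(self.conn)

    def tearDown(self) -> None:
        self.conn.close()

    def test_revenue_sums_recorded_orders(self) -> None:
        record_order(self.conn, 1, 40.0)
        record_order(self.conn, 2, 60.0)
        self.assertEqual(total_revenue(self.conn), 100.0)

    def test_duplicate_order_id_is_rejected(self) -> None:
        record_order(self.conn, 1, 40.0)
        with self.assertRaises(sqlite3.IntegrityError):
            record_order(self.conn, 1, 99.0)
        # The failed insert was rolled back; existing data is untouched.
        self.assertEqual(total_revenue(self.conn), 40.0)
```

These run with `python -m unittest` or pytest. The second test is the kind a mocked test would miss, because the constraint lives in the database, not in the Python code.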

AI validates its own output against the tests

What this prevents: hallucinated code, incorrect assumptions, and silent regressions shipping to production.

We direct the AI to run the full test suite, fix failures, and run again. By the time a human reviews the diff, it already passes a verification bar that most human-written code in legacy systems never had. The test loop is where the AI's speed becomes a genuine structural advantage: it can iterate through test failures faster than any human team.
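
A minimal sketch of that loop, assuming a pytest suite. `request_fix` is a hypothetical hook standing in for however the agent receives failure output (in Claude Code the agent runs the tests itself). The round limit keeps a stuck loop from running indefinitely and hands it to a human instead.

```python
import subprocess
from dataclasses import dataclass
from typing import Callable


@dataclass
class SuiteResult:
    passed: bool
    output: str


def run_pytest() -> SuiteResult:
    """Run the full suite; pytest exits with code 0 only when the run succeeds."""
    proc = subprocess.run(["pytest", "-q"], capture_output=True, text=True)
    return SuiteResult(passed=proc.returncode == 0, output=proc.stdout + proc.stderr)


def validate_until_green(
    run_tests: Callable[[], SuiteResult],
    request_fix: Callable[[str], None],
    max_rounds: int = 5,
) -> bool:
    """Run tests, hand failures to the agent, repeat; stop when green or out of rounds."""
    for _ in range(max_rounds):
        result = run_tests()
        if result.passed:
            return True  # ready for human review of the diff
        request_fix(result.output)  # hypothetical hook into the coding agent
    # Verify the last fix before giving up and escalating to a human.
    return run_tests().passed
```

In use, `validate_until_green(run_pytest, request_fix)` returns `True` only when the whole suite passes; `False` means a human takes over.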

Claude Code by Anthropic

The six steps above are tool-agnostic. The two that follow, codified engineering standards and active memory management, include practices specific to Claude Code, the agentic coding tool we use in our engagements. Claude Code operates in the terminal, reads across the codebase, plans changes spanning multiple files, runs tests, and creates pull requests. It is not a code suggestion engine. It is a development workflow that understands project context.

Codified engineering standards

What this prevents: the accumulating-bandaids problem where each fix compensates for the last.

We specify our definition of clean code in configuration files that the AI reads on every interaction: simple, cohesive services; thin routes; fail-fast behavior with no error swallowing; explicit over implicit. When every line of generated code follows the same conventions, the codebase stays navigable as it grows. Without codified standards, AI generates working code that follows no consistent pattern, and the system becomes opaque within weeks.
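
To make one of these conventions concrete, here is a minimal Python sketch contrasting error swallowing with fail-fast. The function names and the `database_url` key are hypothetical; a standards file states the rule in prose, and this is the kind of code the rule is meant to rule out or require.

```python
import json
from pathlib import Path


# Swallowing: the failure is hidden and a bad default flows downstream.
def load_settings_swallowing(path: Path) -> dict:
    try:
        return json.loads(path.read_text(encoding="utf-8"))
    except Exception:
        return {}  # caller cannot tell "missing file" from "empty config"


# Fail-fast: errors propagate, and required keys are checked up front.
def load_settings(path: Path, required: tuple[str, ...] = ("database_url",)) -> dict:
    # Raises FileNotFoundError or json.JSONDecodeError if the file is missing or malformed.
    settings = json.loads(path.read_text(encoding="utf-8"))
    missing = [key for key in required if key not in settings]
    if missing:
        raise KeyError(f"{path}: missing required settings {missing}")
    return settings
```

The swallowing version lets a misconfigured service start and fail later in an unrelated place; the fail-fast version stops at the point of the mistake, where both a test and a reviewer can see it.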

In Claude Code specifically, this means CLAUDE.md. It is a markdown file in the project root that Claude reads at the start of every session. Coding standards, naming conventions, architecture decisions, build commands, and review checklists go here. We keep ours under 200 lines because longer files reduce adherence. The key point: if the standards are not in CLAUDE.md, the AI does not know about them, and the generated code will reflect that absence.
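
One way to hold that line mechanically is a small CI check. A minimal sketch, assuming CLAUDE.md sits at the repository root; `check_claude_md` and its messages are illustrative, not a Claude Code feature.

```python
from pathlib import Path

MAX_LINES = 200  # the budget described above


def check_claude_md(path: Path = Path("CLAUDE.md"), max_lines: int = MAX_LINES) -> list[str]:
    """Return problems with the project's CLAUDE.md; an empty list means it passes."""
    if not path.is_file():
        return [f"{path} not found: the AI has no codified standards to read"]
    line_count = len(path.read_text(encoding="utf-8").splitlines())
    if line_count >= max_lines:
        return [f"{path} has {line_count} lines; keep it under {max_lines}"]
    return []
```

A CI step would call `check_claude_md()`, print any problems, and exit non-zero if the list is not empty.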

Actively manage what the AI remembers

What this prevents: the AI accumulating stale or incorrect assumptions across sessions.

Claude Code maintains a memory system that persists across conversations. It stores learnings, patterns, and project context in ~/.claude/projects/<project>/memory/ with a MEMORY.md index file. Claude writes to this memory on its own initiative or when explicitly asked. The first 200 lines of MEMORY.md are loaded at the start of every conversation, which means stale or incorrect entries directly influence every subsequent session.

We regularly review these memory files, edit entries that contain outdated assumptions, and delete entries that no longer apply. This is the equivalent of correcting a team member's working notes before they start the next task. We have found this to be a meaningful contributor to output quality over multi-session engagements. Memory files are plain markdown, fully editable. The /memory command in Claude Code lets you view and manage them. We have also found it useful to expose memory files directly in VS Code for continuous visibility into what the AI is carrying from session to session.
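
Because the files are plain markdown, part of that review can be scripted. A minimal sketch, assuming the directory layout described above. It only flags candidates (files untouched for a set number of days, and index lines past the 200-line load limit) and deliberately edits nothing, since deciding what is stale is the human judgment. `review_memory` and the 30-day threshold are hypothetical.

```python
import time
from pathlib import Path

INDEX_LINES_LOADED = 200  # only the first 200 lines of MEMORY.md are loaded


def review_memory(memory_dir: Path, max_age_days: float = 30.0) -> dict[str, list[str]]:
    """Flag memory files for human review: old entries and index lines that never load."""
    cutoff = time.time() - max_age_days * 86400
    stale = sorted(
        p.name for p in memory_dir.glob("*.md") if p.stat().st_mtime < cutoff
    )
    index = memory_dir / "MEMORY.md"
    overflow: list[str] = []
    if index.is_file():
        overflow = index.read_text(encoding="utf-8").splitlines()[INDEX_LINES_LOADED:]
    return {"stale_files": stale, "unloaded_index_lines": overflow}
```

Point it at `~/.claude/projects/<project>/memory/`. Age is only a heuristic: a recent entry can be just as wrong as an old one, which is why the review itself stays manual.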

Engineering judgment does not automate

We have learned that AI-assisted engineering requires the same instinct a director of engineering develops managing a large team: knowing which area to dive deep into, which output to trust, and which to read line by line.

We run periodic code cleanup and tech debt clearing sessions. The debt accumulates faster because the velocity is higher. We know when to evaluate at the architecture level, when to read every line of a critical integration, and when to operate in between. That calibration comes from experience building and running engineering organizations, not from the tools themselves.

This is why we describe ourselves as AI-assisted engineers rather than AI developers. The AI writes code that engineers have specified, scoped, tested, and reviewed. The origin of the keystrokes matters less than the engineering process that governs them.

Compressed timelines change the methodology

When the full cycle from analysis through launch fits into days, the economics of methodology change. We can afford to be sequential and thorough. We can complete system analysis before starting design. We can complete design before writing code. We can generate full test coverage before shipping.

Agile emerged because traditional development cycles were long enough for requirements to drift. When cycles are measured in days, that drift does not have time to accumulate. Sequential rigor becomes practical. We use it.

Where this leaves us

The tools improve monthly. The organizational patterns around them are still forming. What we have described here is what works for us today, tested across engagements in healthcare, pharma, manufacturing, and supply chain.

We deliver completed customer value rapidly. That is not because we are vibe coders. We are AI-assisted engineers. Engineers first.

Frequently asked questions

"We built an app with AI and now adding features breaks things. Can this be fixed?"

Yes. The typical path is: first, generate a thorough test suite against the system's current behavior, so you have a regression safety net. Second, produce architectural documentation so that future changes can be made with an understanding of what they affect. Third, codify engineering standards so new code follows consistent patterns. Once those three pieces are in place, the system becomes safe to evolve. We have done this on AI-built systems and on decades-old legacy systems. The approach is the same.
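
The first step is often called characterization (or golden-master) testing: record what the system does today and fail when that changes, without yet judging whether today's behavior is correct. A minimal Python sketch, assuming the function under test is deterministic and returns JSON-serializable values; `check_against_golden` is a hypothetical helper.

```python
import json
from pathlib import Path
from typing import Any, Callable


def check_against_golden(
    func: Callable[..., Any], cases: list[dict], golden: Path
) -> list[str]:
    """Record current outputs on first run; on later runs, report any that changed."""
    # Each case is a dict of keyword arguments; its sorted JSON form is the lookup key.
    actual = {json.dumps(case, sort_keys=True): func(**case) for case in cases}
    if not golden.exists():
        golden.write_text(json.dumps(actual, indent=2, sort_keys=True), encoding="utf-8")
        return []  # baseline captured: this is what the system does today
    expected = json.loads(golden.read_text(encoding="utf-8"))
    return [
        f"{key}: expected {expected.get(key)!r}, got {value!r}"
        for key, value in actual.items()
        if expected.get(key) != value
    ]
```

The golden file is committed alongside the tests. When a later change alters an output, the diff either reveals a regression or documents an intended behavior change that someone must approve.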

"We tried AI tools and the code quality was poor. Why would this be different?"

In most cases we have examined, the quality issue traces to process. Specifically: the absence of codified standards for the AI to follow, the absence of tests for it to validate against, and the absence of human review with the same rigor applied to any other code contribution. When we onboard AI into an engagement, we establish all three before generating production code. The output quality reflects the process that governs it.

"This sounds like it requires senior engineers. What about the rest of the team?"

It does require senior engineering judgment, at minimum someone who can evaluate the output. But the artifacts this process creates (detailed documentation, test suites, codified standards) make the codebase more accessible to less experienced engineers than most legacy systems are today. A junior engineer working in a well-tested, well-documented AI-assisted codebase is more effective than the same engineer navigating an undocumented system with tribal knowledge.

"How do you deal with hallucinations?"

Tests and review. If the test suite covers all critical paths and the architecture is fail-fast, hallucinated code fails at the test boundary before it reaches production. Human developers make analogous errors constantly ("I assumed that API returned a list"), and the mitigation is identical. Thorough tests catch both. Absent tests catch neither.
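
A minimal sketch of what fail-fast means at that boundary, using the "I assumed that API returned a list" example. `parse_user_ids` and the payload shapes are hypothetical.

```python
from typing import Any


def parse_user_ids(payload: Any) -> list[int]:
    """Validate an external response at the boundary instead of trusting an assumption."""
    if not isinstance(payload, list):
        raise TypeError(f"expected a list of user ids, got {type(payload).__name__}")
    if not all(isinstance(item, int) for item in payload):
        raise TypeError("expected every user id to be an int")
    return payload
```

If the code (human- or AI-written) assumed a list and the API actually returns `{"ids": [...]}`, the first test that exercises this path fails with a clear message instead of passing a dict deeper into the system.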

"What about security and compliance?"

AI-generated code does carry higher security risk when unreviewed. Veracode's 2025 analysis found 2.74x more vulnerabilities in AI-generated code than in human-written code. We mitigate this structurally: security requirements are codified in the AI's configuration, SAST and DAST run as part of the validation loop, and code review applies the same scrutiny regardless of who or what wrote the code. We work in regulated industries where compliance is not optional. Our process was developed in that context.
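
A minimal sketch of the SAST gate, assuming a Python codebase and Bandit (an open-source static analyzer for Python) as the tool. Bandit's JSON report lists findings under `results`, each with an `issue_severity` field. The severity threshold and helper names are illustrative. DAST runs separately against a deployed test environment and is not shown.

```python
import json
import subprocess
import tempfile
from pathlib import Path

SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3}


def run_bandit(source_dir: str) -> dict:
    """Scan `source_dir` with Bandit and return the parsed JSON report."""
    with tempfile.TemporaryDirectory() as tmp:
        report_path = Path(tmp) / "bandit.json"
        # The exit code is not used as the gate; the severity filter in
        # blocking_findings is.
        subprocess.run(
            ["bandit", "-r", source_dir, "-f", "json", "-o", str(report_path)],
            check=False,
        )
        return json.loads(report_path.read_text(encoding="utf-8"))


def blocking_findings(report: dict, threshold: str = "MEDIUM") -> list[str]:
    """Findings at or above `threshold`; any result blocks the merge."""
    floor = SEVERITY_RANK[threshold]
    return [
        f"{r['filename']}:{r['line_number']} {r['test_id']} {r['issue_text']}"
        for r in report.get("results", [])
        if SEVERITY_RANK.get(r["issue_severity"], 0) >= floor
    ]
```

In the validation loop, a non-empty `blocking_findings(run_bandit("src"))` is treated like a failing test: the agent fixes it and the loop runs again.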

"Is this approach tied to a specific tool?"

No. The market includes GitHub Copilot, Cursor, Claude Code, and many others across modalities from IDE integrations to CLI agents. We have preferences, but the approach is tool-agnostic. The principles (understand the system first, document for humans, test thoroughly, codify standards, validate continuously) apply regardless of which tool generates the code.

"Can you help us fix an AI-built system that is already in production?"

Yes. The process is the same one we use for legacy modernization on any system. We start by understanding what the system does today: generating documentation, mapping dependencies, and building a test suite against current behavior. Once there is a regression safety net, we can refactor, add features, and evolve the system safely. The age of the code and who wrote it (human or AI) does not change the approach. The structural requirements for safe evolution are the same.