March 2026

From Empty Repository to Production in Three Months at a Pharmaceutical Manufacturer

How domain knowledge, engineering experience, and Anthropic's Claude Code compressed a regulated software deployment from the typical twelve to eighteen months to under three. Delivered as Custom SaaS, not a project.

Three months from an empty repository to a production system at a pharmaceutical manufacturer. That timeline is not normal for this industry. Most enterprise software deployments in pharma take twelve to eighteen months. Some take longer.

We built a custom operational platform for i3 Pharmaceuticals, a specialty generic pharmaceutical company based in Warminster, Pennsylvania. i3 develops and manufactures complex solid oral dosage forms, including products like Posaconazole DR, Maraviroc, and Guanfacine. Their 150,000 square foot facility runs under full cGMP oversight, with FDA Prior Approval and GMP inspections.

This article explains how we delivered production software in under three months for a regulated pharmaceutical manufacturer, why we structured it as a Custom SaaS engagement instead of a traditional project, and how Anthropic's Claude Code changed the engineering economics.

Why three months is unusual in pharma

Pharmaceutical software deployments are slow for structural reasons, not just organizational ones.

The regulatory environment (FDA cGMP, 21 CFR Part 11, GxP) creates requirements that most software vendors and consultants discover during implementation rather than before it: validation protocols, audit trail requirements, data integrity controls, role-based access, and electronic signature compliance. These are not features you bolt on. They shape the architecture from the first commit.

Most consulting engagements spend the first three to six months in discovery. The vendor is learning what GMP means in practice, how batch records flow, what a deviation looks like, how QA sign-off works. They are learning the domain on the customer's timeline and the customer's budget.

We did not have that learning curve.

Three factors that compressed the timeline

1. We already knew the domain

Our team has worked inside pharmaceutical and healthcare organizations, with GxP validation frameworks, 21 CFR Part 11 data integrity requirements, electronic batch records, LIMS workflows, deviation management, and change control. We have operated in these environments, not just consulted for them.

When i3 described their operational constraints, we did not need a discovery phase to understand what they meant. We could map their requirements to architectural decisions in the first week. Validation-aware audit trails were in the initial data model. Role-based access aligned to their QA workflow was in the first sprint.
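To make "audit trails in the initial data model" concrete, here is a minimal sketch of an append-only audit log. It is illustrative only and does not describe the i3 platform: the class and field names are hypothetical, storage is in memory, and the hash chaining used for tamper evidence is our assumption for the example rather than a technique the engagement is stated to have used.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


@dataclass(frozen=True)
class AuditEntry:
    """One immutable record: who did what, to which record, when, and why."""
    user_id: str
    action: str
    record_id: str
    reason: str
    timestamp: str
    prev_hash: str
    entry_hash: str


def _digest(payload):
    # Canonical JSON (sorted keys) so identical payloads always hash identically.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


class AuditTrail:
    """Hypothetical append-only log; each entry's hash covers the previous hash."""

    def __init__(self):
        self._entries = []

    def append(self, user_id, action, record_id, reason):
        prev_hash = self._entries[-1].entry_hash if self._entries else GENESIS_HASH
        payload = {
            "user_id": user_id,
            "action": action,
            "record_id": record_id,
            "reason": reason,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry = AuditEntry(entry_hash=_digest(payload), **payload)
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            payload = asdict(entry)
            stored_hash = payload.pop("entry_hash")
            if entry.prev_hash != prev_hash or _digest(payload) != stored_hash:
                return False
            prev_hash = stored_hash
        return True
```

Calling `append(...)` for each change and `verify()` during review shows the general idea: when the log is part of the data model from the start, every write path records who, what, when, and why, and later edits to history become detectable.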

This is the difference between a team that has read about GMP compliance and a team that has built systems that survive FDA inspections. The former asks questions for three months. The latter writes code in the first week.

2. Decades of engineering in complex environments

Speed in regulated software is not about cutting corners. It is about making correct architectural decisions early so you do not have to redo them later.

Our engineers have built production systems across healthcare, manufacturing, financial services, and supply chain. We know how to structure a system that needs audit trails from day one, how to design for compliance without slowing down delivery, how to build multi-tenant architectures that isolate data correctly, and how to deploy to production environments where downtime has real operational consequences.

In the i3 engagement, this showed up as fewer false starts. We did not prototype three different data models before finding one that worked. We did not discover halfway through that our authentication model could not support the sign-off workflow QA needed. We did not have to rearchitect the audit trail after a compliance review.

We got the foundational decisions right early because we had made them before in similar environments.

3. Anthropic's Claude Code changed the engineering economics

Domain knowledge and engineering experience explain why we made correct decisions quickly. Claude Code explains why we executed on those decisions at a pace that would not have been possible two years ago.

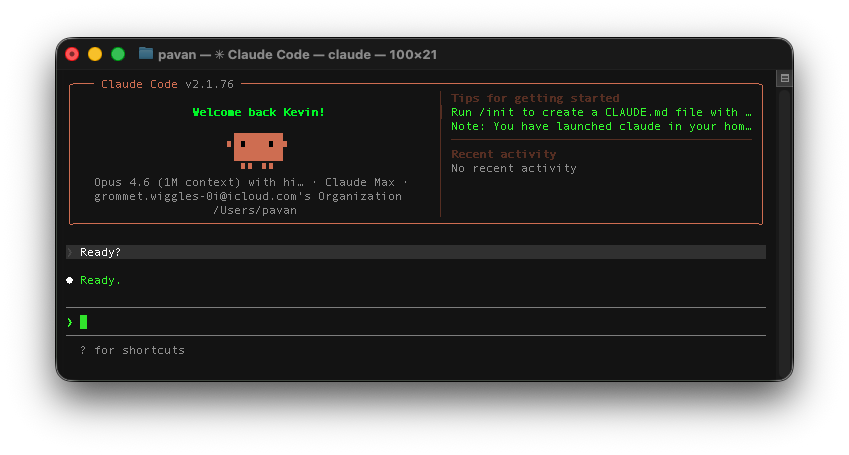

by Anthropic

Claude Code is an agentic coding tool that operates in the terminal, reads your full codebase, and executes multi-step development tasks through natural language. It plans changes, writes code across multiple files, runs tests, and creates pull requests. It is not a code suggestion engine. It is a development workflow that understands project context.

We used Claude Code throughout the i3 engagement. Here is what that looked like in practice.

Boilerplate and scaffolding. Regulated systems require significant structural code: audit trail middleware, role-based access control, input validation, error handling, logging. These are not creative engineering problems. They are well-understood patterns that need to be implemented correctly and consistently. Claude Code generated this code from specifications we provided, and our engineers reviewed and validated every line.
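As an illustration of the kind of structural pattern meant here, the following is a minimal sketch of a role-based access check written as a decorator. The roles, permission strings, and function names are hypothetical and chosen only for the example; they are not taken from the i3 system.

```python
from functools import wraps

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "operator": {"record:create"},
    "qa_reviewer": {"record:create", "record:approve"},
}


class PermissionDenied(Exception):
    """Raised when a user's role does not grant the required permission."""


def requires(permission):
    """Decorator: reject the call unless the user's role grants `permission`."""
    def decorator(func):
        @wraps(func)
        def wrapper(user, *args, **kwargs):
            granted = ROLE_PERMISSIONS.get(user["role"], set())
            if permission not in granted:
                raise PermissionDenied(f"{user['id']} lacks {permission}")
            return func(user, *args, **kwargs)
        return wrapper
    return decorator


@requires("record:approve")
def approve_record(user, record_id):
    # Only reached when the permission check above has passed.
    return {"record_id": record_id, "approved_by": user["id"]}
```

Keeping the check in one decorator, rather than repeating it inside each function, is what makes this sort of code easy to generate consistently and easy to review.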

Test coverage. In a GxP-adjacent environment, test coverage is not optional. We used Claude Code to generate thorough test suites for each module, including edge cases that manual test writing often misses. Engineers reviewed the test logic and added domain-specific scenarios, but the baseline coverage came from Claude Code at a pace that would have taken significantly longer by hand.

Cross-file refactoring. As the system grew, we needed to restructure modules without breaking existing functionality. Claude Code operates on the full codebase context, which means it could trace dependencies across files, update interfaces consistently, and verify that changes did not introduce regressions. This kind of refactoring typically slows teams down mid-project. For us, it was routine.

Documentation. Compliance environments require documentation: API documentation, data flow descriptions, and configuration guides. Claude Code generated first drafts from the codebase itself, which our engineers then reviewed and refined. This removed one of the typical bottlenecks in regulated software delivery.

How we used Claude Code safely in a regulated context

AI-generated code in a pharmaceutical context requires discipline. We did not use Claude Code as an autonomous agent. We used it as an accelerator under engineering supervision.

Every piece of generated code went through the same review process as human-written code. Pull requests were reviewed by senior engineers who understood both the technical implementation and the regulatory context. Automated test suites validated behavior. Integration tests ran against the full system before any merge.

The model we followed: Claude Code proposes, engineers verify, tests validate, reviewers approve. No generated code reached production without human review and automated verification.
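The propose, verify, validate, approve sequence can be pictured as a merge gate. The sketch below is a simplified illustration under our own assumptions: the `ChangeRequest` fields and the rule that an approver must be someone other than the author are hypothetical, not a description of the actual tooling.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    author: str
    ai_assisted: bool        # recorded for traceability; does not relax the gate
    engineer_verified: bool  # an engineer has read and verified the change
    tests_passed: bool       # automated unit and integration tests are green
    approvers: tuple = ()    # reviewers who approved the pull request


def blocking_reasons(change):
    """List every reason the change may not merge; an empty list means it may."""
    reasons = []
    if not change.engineer_verified:
        reasons.append("engineer verification missing")
    if not change.tests_passed:
        reasons.append("automated tests have not passed")
    # Assumption for this sketch: approval must come from someone other than the author.
    if not any(name != change.author for name in change.approvers):
        reasons.append("no independent reviewer approval")
    return reasons


def can_merge(change):
    return not blocking_reasons(change)
```

Note that `ai_assisted` never appears in the checks: generated and human-written changes pass through the same gate, which is the point of the process described above.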

This is important because the risk in AI-assisted engineering is not that the AI writes bad code. The risk is that teams trust it without verification. We did not change our quality bar. We changed our throughput at that bar.

Near-complete automated test coverage as an operational requirement

We are approaching 100% automated test coverage on this system. That is not a vanity metric. It is an operational necessity for how we work.

The Custom SaaS model means we continuously improve the platform based on customer feedback and our own observations of how the system is being used. i3's team uses the system daily. They tell us what is working, what is awkward, what they need next. We respond with code changes, sometimes within the same week. That cadence of change is only sustainable if we can verify that every modification does not break existing behavior.

Claude Code is central to maintaining that coverage. When we build a new feature, Claude Code generates the corresponding test suite. When we refactor existing code, it updates tests to reflect the new structure. When we fix a bug, it writes a regression test that captures the failure mode. Engineers review every test for correctness and domain relevance, but the generation speed means test coverage does not lag behind feature delivery.
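As a small illustration of a regression test that captures a failure mode, consider the sketch below. The helper `normalize_lot_number` and the bug it describes are invented for the example and do not come from the i3 codebase.

```python
import unittest


def normalize_lot_number(raw):
    """Hypothetical helper: canonical form of a lot number used for lookups."""
    # Imagined original bug: the value was upper-cased but not stripped,
    # so "ab1234 " and "AB1234" were treated as two different lots.
    return raw.strip().upper()


class TestLotNumberRegression(unittest.TestCase):
    """Pins the fixed behavior so the same failure cannot silently return."""

    def test_trailing_whitespace_does_not_create_a_new_lot(self):
        self.assertEqual(normalize_lot_number("ab1234 "), "AB1234")

    def test_canonical_value_is_unchanged(self):
        self.assertEqual(normalize_lot_number("AB1234"), "AB1234")
```

Saved as a module, this can be run with `python -m unittest`. The test name states the failure mode in plain language, which helps when the suite is later read as evidence of what was verified.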

In a regulated environment, this matters beyond engineering convenience. These test suites serve as executable documentation of system behavior. They demonstrate that the system does what it is supposed to do, and they provide evidence that changes were verified before deployment. That is the kind of traceability a cGMP environment requires.

Integrating with the manufacturing floor and warehouse

i3's operations run on their manufacturing floor and in their warehouse. Any system we build has to meet the work where it happens, not require the work to come to a screen in an office.

We are using OCR and other AI capabilities to plug into existing operational workflows with minimal disruption. The goal is to capture data from the processes and documents that already exist, in the formats they already exist in, rather than asking operators to change how they work to feed a new system.

This is a deliberate design decision. Pharmaceutical manufacturing environments have established procedures. Operators follow SOPs that have been validated and approved. Introducing a new digital system that requires significant changes to those procedures creates regulatory risk, training overhead, and resistance. The better approach is to layer digital capabilities on top of existing workflows and let the system adapt to the operation, not the other way around.

Change management as an engineering responsibility

Software that nobody adopts delivers no value. We have seen this pattern in regulated industries repeatedly: a technically sound system gets deployed and then sits underutilized because the team that built it did not think carefully about how people actually work.

At i3, we thought through change management from the beginning. How do team members move through their daily routines on the manufacturing floor and in the warehouse? Which SOPs will be affected by the new system, and how? Where can we deliver value immediately without requiring anyone to learn a new process? Where do we need training, and what is the lightest-weight version of that training that still works?

We mapped the operational workflows before designing the user workflows. We identified the points where the system could reduce effort without changing the sequence of steps people already follow. We designed the interface so that the most common tasks require the fewest actions. We deployed incrementally, starting with the functions that provided the most immediate value and the least disruption.

This is not a separate workstream from the engineering. It is part of it. A system that requires extensive change management to adopt is a system that was designed without sufficient understanding of the operating environment. Claude Code gave us speed in writing code. Domain empathy determined whether that code would actually be used.

Engineering speed is necessary but not sufficient

Claude Code enabled us to write, test, and deploy code at a pace that made the three-month timeline achievable. But speed alone does not explain why the system was adopted and is being used daily.

The engineering had to be right: correct architecture, proper compliance controls, near-complete automated test coverage. The integration had to be right: OCR and AI that captured data from existing processes without requiring workflow changes. The change management had to be right: thoughtful design that respected how people work and minimized friction.

We see this consistently across our engagements in regulated industries. The technology consulting industry tends to treat engineering, integration, and adoption as separate concerns, often handled by separate teams. In practice, they are coupled. A system that is well-engineered but poorly integrated creates data entry overhead. A system that is well-integrated but poorly adopted sits unused. A system that is adopted but poorly tested becomes a compliance liability.

Delivering real value to a customer like i3 required getting all three right, at the same time, at a pace that kept the engagement economically viable. That is what we did.

Why we structured this as Custom SaaS, not a project

A traditional software engagement for a company like i3 would have looked like this: a large upfront CapEx investment, a multi-month requirements and procurement process, a fixed-scope delivery, and then a separate conversation about maintenance and support.

We did something different.

We built the platform as a Custom SaaS offering. i3 subscribes to the system as an operational expense. We own, operate, and continuously improve the platform. They pay a predictable monthly cost. No large capital outlay. No depreciation schedule. No procurement committee.

This changed the economics in several ways.

| Traditional Project Model | Custom SaaS Model |
| --- | --- |
| Large upfront CapEx investment | No upfront capital investment |
| Multi-month procurement process | Simplified procurement (OpEx subscription) |
| Fixed scope, change orders for additions | Continuous improvement included |
| Asset depreciates after delivery | Platform improves over time |
| Separate maintenance contract | Maintenance, hosting, and support included |
| Customer owns and operates the system | We own and operate the system |

For a specialty pharmaceutical company with approximately 50 employees, this model removes meaningful friction. The finance team does not need to justify a large capital expenditure. The operations team does not need to build internal capacity to maintain custom software. The platform improves over time without requiring i3 to manage a development team or negotiate change orders.

The Custom SaaS model also aligned incentives correctly. Because we operate the system, we are motivated to build it well. Operational problems are our problems. Uptime is our responsibility. This is different from a project model where the vendor's incentive ends at delivery.

What we delivered

The production system went live in under three months. It supports i3's operational workflows with full audit trail capabilities, role-based access control, and data integrity controls appropriate for a cGMP environment.

We are not disclosing the specific functionality of the system, consistent with our confidentiality obligations. What we can say is that it addressed real operational bottlenecks that i3 had been managing through manual processes, and that it was adopted by the operations team without the extended change management effort that enterprise software typically requires.

The system is in production. It is being used daily. We continue to operate and improve it.

What this engagement demonstrates

The three-month timeline was not an accident or an anomaly. It was the result of three factors that compound: domain knowledge that eliminates discovery overhead, engineering experience that reduces architectural rework, and AI-assisted engineering that increases implementation throughput without lowering the quality bar.

Remove any one of those three factors and the timeline extends. Without domain knowledge, you spend months learning what GMP compliance means in practice. Without engineering experience, you make foundational mistakes that require rework. Without Claude Code, you execute at a pace that makes the three-month timeline impractical even with the first two factors.

The Custom SaaS model removed the commercial friction. No large CapEx approval. No extended procurement cycle. No fixed-scope negotiation. i3 got a production system they could start using, and we got a long-term operational relationship where our incentives are aligned with the system's performance.

This is the model we believe regulated industries need. Not cheaper consultants. Not faster vendors. A combination of real domain depth, serious engineering capability, responsible AI-assisted development, and a commercial structure that aligns incentives over the long term.